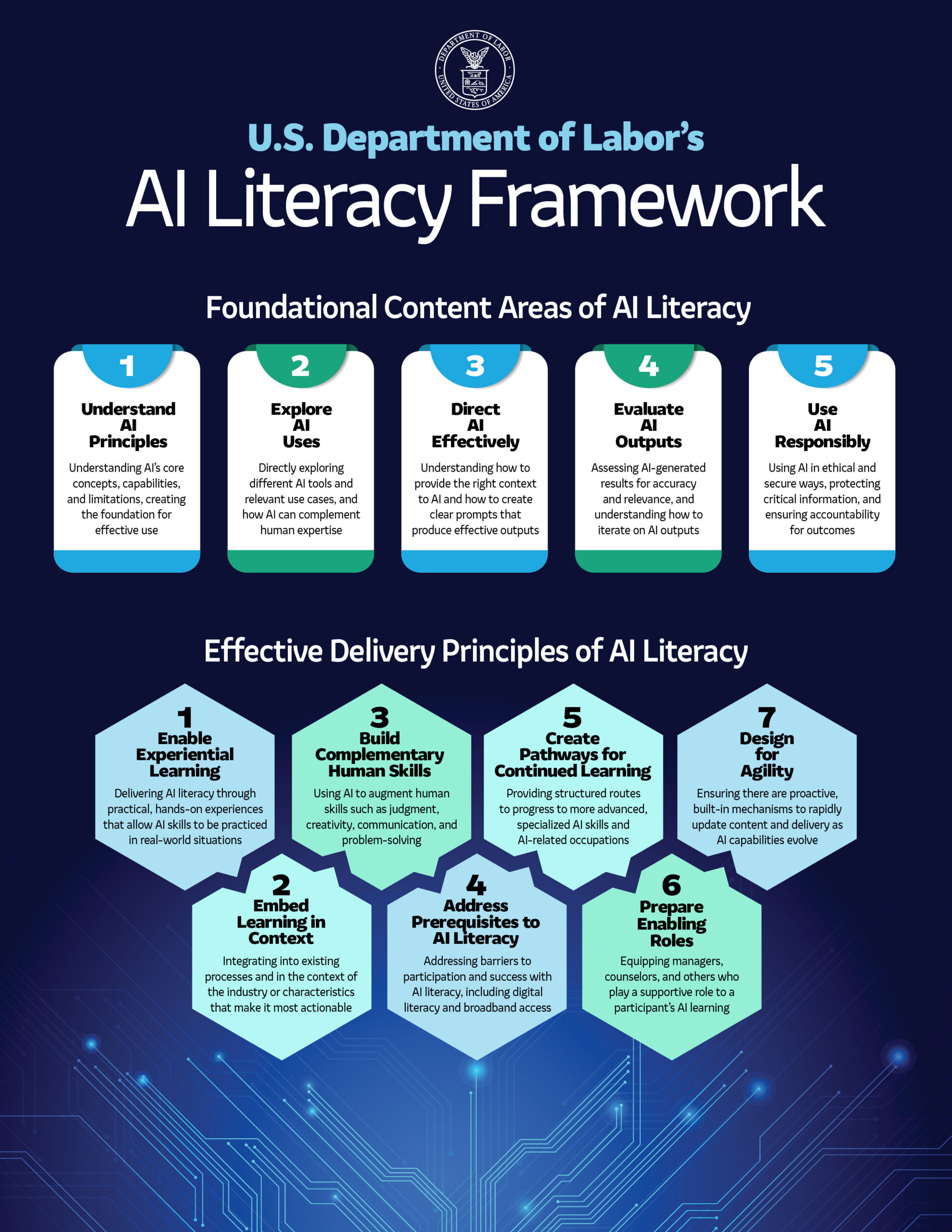

You’ve probably seen the Department of Labor’s new AI Literacy Framework making the rounds on LinkedIn this week. Here it is.

Image source: U.S. Department of Labor, https://www.dol.gov/newsroom/releases/eta/eta20260213

I’ve read the whole thing — not just the one-pager, but the full Training and Employment Notice with all its attachments and references. My honest reaction: Claude could have generated this in seven seconds if you prompted it to “create an AI literacy framework that sounds like it came out of a government committee.”

That’s not a cheap shot. That is actually the problem I want to talk about.

What You’re Looking At

The framework tells workforce boards, community colleges, and employers to teach AI literacy across five areas: understand AI principles, explore AI uses, direct AI effectively, evaluate AI outputs, and use AI responsibly. It then lays out seven principles for how training programs should deliver this content — experiential learning, embedding learning in context, building complementary human skills, and so on.

If you work in this space, none of that will surprise you. You already know workers need to understand what AI can and can’t do, that prompting matters, that outputs need verification, and that responsible use requires guardrails. The framework describes the table stakes and stops there.

And the framework is explicit that it doesn’t mandate anything. No specific curricula. No regulatory requirements. It’s voluntary guidance — a resource for program design at an extremely high level. So what you’re actually looking at is a document that tells the American workforce system to teach AI literacy without telling anyone what AI literacy looks like when it’s working.

The Speed Problem

Here’s where I want to push on this, because the speed issue isn’t theoretical for us. We build and maintain AI governance and literacy curricula at Proceptual. Not frameworks about curricula, but actual course materials that real people sit through and then apply when they go back to their jobs.

In the last two months alone, we’ve had to make significant revisions because of the release of Claude Opus 4.6 and OpenAI’s Openclaw. These weren’t cosmetic updates where we swapped a few screenshots. The capabilities in those releases changed how we teach people to evaluate AI outputs, how we frame the conversation around AI agents and autonomous workflows, and what “directing AI effectively” even means when the tools can now search the web, cite sources, and execute multi-step tasks on their own.

Now look at the DOL’s timeline. The framework references guidance letters from July and August 2025. It builds on an executive order from April 2025. The framework itself dropped on February 13, 2026. That’s roughly ten months of development — stakeholder input, interagency coordination, alignment with the Department of Education, legal review — during which the AI landscape shifted multiple times in ways that directly affect what “AI literacy” should include.

The framework tells training providers to teach workers about “hallucinations and accuracy limits.” That’s genuinely important. But the latest generation of tools has dramatically reduced hallucination rates, introduced web search and citation capabilities, and started producing outputs that look very different from what workers encountered even six months ago. The judgment calls are different now. The failure modes are different. The specific things you need to watch for when evaluating an AI output have changed in ways that matter for the person sitting at a desk trying to decide whether to trust what Claude or ChatGPT just told them.

Government frameworks are built through a process designed for stability, and that process works well for labor law, workplace safety standards, and benefits administration: things that change on the scale of years, not months. That same process is fundamentally incompatible with a technology where the ground shifts every quarter.

Governance Theater

Here’s what concerns me most, and it’s something I see with clients all the time. The framework makes it remarkably easy to feel like you’re doing something about AI without actually changing anything about how your organization uses AI.

I had a client last year, a mid-sized professional services firm, that checked every governance box you could imagine. They formed an AI steering committee. They wrote an acceptable use policy. They ran a half-day training session called “AI Literacy Fundamentals.” Leadership felt great about it. And when I actually sat down with their teams and asked how they were using AI day to day, nothing had changed. The people who were already using ChatGPT kept using it the same way. The people who were afraid of AI were still afraid. The training was so generic that nobody could connect it to their actual work.

The DOL framework enables this pattern at a national scale. A state workforce board can point to the five content areas, fund some broad AI literacy programming through WIOA, report to Washington that they’re addressing the skills gap, and nobody has to ask the harder question: Did any worker actually get better at their job because of this?

Productive control of AI requires specificity that this framework deliberately avoids. It requires knowing which tools your people use, what data flows through those tools, what decisions are influenced by the outputs, and what happens when something goes wrong. A healthcare system implementing AI-assisted diagnostics needs guidance on validating outputs against clinical standards and documenting AI-assisted decisions for regulatory purposes. A manufacturing company deploying AI quality control needs to understand specific failure modes and calibrate human oversight for their production environment. “Use AI responsibly” doesn’t get anyone closer to solving those problems.

What I’d Actually Recommend

If I were advising the DOL — and I genuinely would welcome that conversation — I’d push for a few things alongside this framework.

First, fund pilot programs with measurable outcomes. Pick 20 industries. Fund specific AI literacy training programs in each one. Measure what actually moves the needle on worker productivity, error rates, and responsible use. What does effective AI training look like for a warehouse worker versus a nurse versus a paralegal? We don’t know yet, because nobody has run the experiments at scale. No amount of framework publishing will generate that data. Only implementation will.

Second, require specificity from anyone using federal workforce dollars for AI training. “We taught workers about AI principles” shouldn’t satisfy a WIOA reporting requirement. “We trained medical billing staff to use AI-assisted coding tools and reduced claim rejection rates by 15%” should. Tie the funding to outcomes, not to content area coverage.

Third, put a real update cadence on the framework. The document says it will “evolve over time based on stakeholder input, advances in AI capabilities, and changes in labor market dynamics.” Fine. But put a date on it. Quarterly updates, or at minimum every six months. If the DOL can’t turn around framework revisions on that timeline, it tells you everything about whether this process can keep pace with the technology.

And fourth — this is the big one — acknowledge that AI literacy without AI governance is incomplete. The framework barely touches organizational governance, risk management, or the regulatory landscape. You can train every worker in America to use AI effectively, and if the organizations they work for don’t have governance structures, data handling policies, and vendor management processes in place, you’ve just created a more skilled workforce that’s still generating compliance risk. Literacy and governance have to move together. Teaching one without the other is like teaching someone to drive without explaining traffic laws.

About the Author

John Rood is the founder of Proceptual, where he helps organizations build practical AI governance systems that actually work. He has taught AI governance at Michigan State University and the University of Chicago, and his writing has appeared in HR Brew and TechTarget. He has spoken at the national SHRM conference and works with organizations ranging from startups to private equity portfolio companies on AI implementation and governance.