A YC-backed compliance startup just imploded in public.

Delve raised $32 million last summer at a $300 million valuation. Their pitch: SOC 2 and ISO 27001 certifications, fast. An anonymous whistleblower coalition called DeepDelver published a detailed investigation alleging the company fabricated audit evidence for hundreds of clients. According to the report, Delve pre-wrote auditor conclusions before clients even submitted company descriptions and generated near-identical reports for 493 of 494 customers, down to the same grammatical errors. The platform also gave clients fake board minutes, fake risk assessments, and fake incident simulations they could adopt with one click.

TechCrunch confirmed the story. Insight Partners, Delve’s lead investor, briefly scrubbed its own blog post explaining why it backed the company. As of today, DeepDelver has published a second round of evidence, including video and internal Slack messages. Delve’s CEO denied the allegations on X. The whistleblower responded within 24 hours.

The kicker: one of Delve’s customers, LiteLLM, held two Delve-issued security certifications when its open-source project got infected with malware last week.

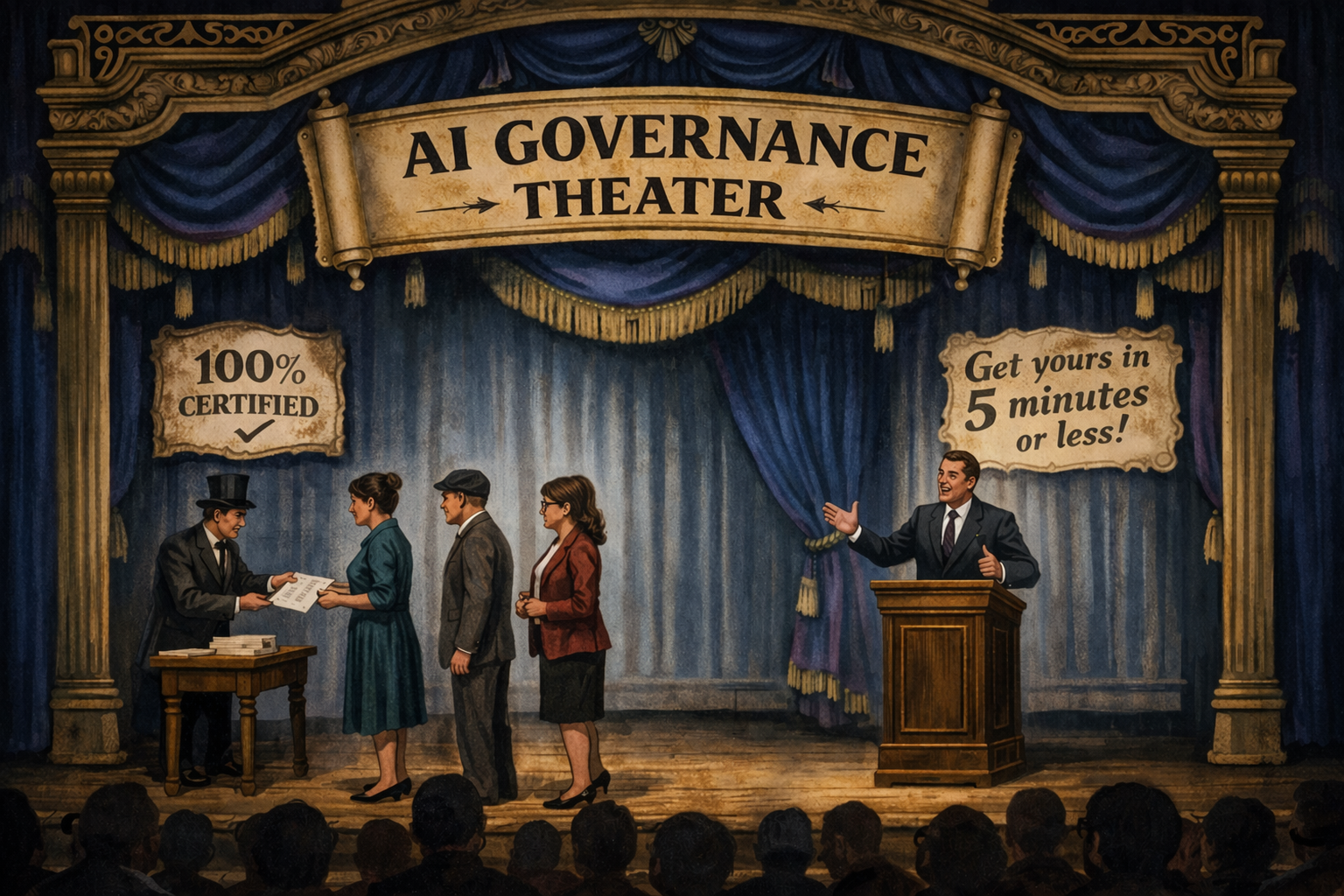

What Governance Theater Looks Like

Governance theater is when every artifact of compliance exists and none of the underlying controls do.

The policies are written. The reports are issued. The trust page is live. The certificate hangs on the wall (or more likely, sits in a prospect’s security questionnaire response). Everyone involved can point to a document. Nobody can point to a control that actually changed how the organization operates.

Delve allegedly industrialized this. The platform generated the conclusions. The auditor signed them. The customer got a PDF to close deals. The entire loop ran without anyone testing whether the controls described in the report matched reality.

That’s the pattern. Speed becomes the selling point. The customer, who usually doesn’t have deep compliance expertise, can’t distinguish a real audit from an expensive PDF. And the market rewards the fastest, cheapest option until someone pulls the thread.

The Same Dynamic Is Building in AI Governance

The AI governance market is younger than the compliance automation market. But the incentive structure is identical.

Organizations are under pressure to demonstrate AI governance maturity. Boards are asking about it. Customers are putting it in RFPs. Regulators in the EU, Colorado, and a growing list of other jurisdictions are writing it into law.

That pressure creates a market for shortcuts. A platform writes your AI policy, generates your risk register, auto-fills your impact assessment, and hands you something that looks like governance. Every artifact exists. No governance happened.

The risk assessments could apply to any organization running any AI system. The policies come from a template library and never connect to deployment decisions. The monitoring plan describes what should happen but doesn’t say what triggers action, who owns the response, or what “unacceptable risk” means for your specific use cases.

Sound familiar? It should. It’s the Delve model applied to AI.

Point-in-Time vs. Living System

The Delve allegations describe a point-in-time compliance operation. Generate the report. Pass the audit. Move on. The whole model depends on reaching a moment of certification, not maintaining the conditions that certification is supposed to represent.

Real governance is a living system. It changes when your AI deployments change. It produces different outputs in Q3 than it did in Q1 because your risk profile shifted. The steering committee meets and makes decisions that alter how teams build and deploy AI. When a model drifts or a new use case emerges, the governance framework has a mechanism to catch it, evaluate it, and act.
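To make "a mechanism to catch it, evaluate it, and act" concrete, here is a minimal Python sketch of what a monitoring rule might look like once it names a system, a trigger, and an owner. Everything in it is an illustrative assumption: the `ReviewTrigger` fields, the system names, the metrics, and the thresholds are hypothetical and would need to come from your own deployments.

```python
from dataclasses import dataclass


@dataclass
class ReviewTrigger:
    """One monitoring rule: what is measured, when it fires, who responds."""
    system: str       # the specific AI system being monitored (hypothetical)
    metric: str       # hypothetical metric name
    threshold: float  # a reading above this value requires a review
    owner: str        # person or role accountable for the response
    action: str       # what happens when the trigger fires


def fired_triggers(triggers, readings):
    """Return the triggers whose latest reading exceeds the threshold.

    `readings` maps (system, metric) to the latest observed value.
    A missing reading also fires the trigger: nobody is measuring.
    """
    fired = []
    for t in triggers:
        value = readings.get((t.system, t.metric))
        if value is None or value > t.threshold:
            fired.append(t)
    return fired


# Illustrative data only; none of these systems or numbers come from a real program.
triggers = [
    ReviewTrigger("resume-screener", "selection_rate_gap", 0.10,
                  "Head of Talent", "pause automated rejections and review"),
    ReviewTrigger("support-chatbot", "escalation_rate", 0.25,
                  "Support Ops Lead", "sample transcripts and review"),
]
readings = {
    ("resume-screener", "selection_rate_gap"): 0.14,
    ("support-chatbot", "escalation_rate"): 0.08,
}

for t in fired_triggers(triggers, readings):
    print(f"{t.system}: {t.metric} breached -> {t.owner}: {t.action}")
```

The code itself is trivial. The useful part is that every field has to be filled in with something specific before the rule can run, which is exactly what a templated monitoring plan never forces you to do.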

A point-in-time approach gives you a snapshot that starts decaying the moment it’s produced. A living system gives you the ability to answer hard questions whenever they arrive: from a regulator, a customer, a board member, or an incident.

The test is simple. Hand your AI governance documentation to someone unfamiliar with your organization. Can they tell which AI systems you run, what risks those systems create, who’s responsible for each risk, and what happens when something goes wrong? Or could the same documents belong to any company?

If it’s the latter, you have theater.

Check Your Own Work

The Delve scandal will probably end in lawsuits and writedowns. That’s Delve’s problem.

AI governance is building the same market dynamics that produced Delve: vendors selling speed, a customer base that can’t easily tell the difference between governance and a governance-shaped PDF, and real organizational risk sitting unaddressed underneath the artifacts.

Pull your AI governance documents. Read them. If your risk assessments don’t name specific AI systems and specific failure modes, they’re decoration. If your policies don’t connect to deployment gates that actually stop or modify a project, they’re theater. If your monitoring plan doesn’t describe what triggers a review and who owns it, it’s a wish list.
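If you keep governance records in any structured form, the three checks above can be roughed out in a few lines of Python. This is a sketch under stated assumptions, not a compliance tool: the dictionary shape, the key names, and the `audit_governance` function are all hypothetical, and a real review still requires a person reading the documents.

```python
def audit_governance(docs):
    """Return a list of findings for a dict describing governance documents.

    Assumed (hypothetical) shape:
      docs["risk_assessments"]: list of {"system": str, "failure_modes": [str]}
      docs["policies"]:         list of {"name": str, "deployment_gates": [str]}
      docs["monitoring_plan"]:  {"triggers": [str], "owner": str}
    """
    findings = []

    # Risk assessments must name a specific system and at least one failure mode.
    for ra in docs.get("risk_assessments", []):
        if not ra.get("system") or not ra.get("failure_modes"):
            findings.append(
                "decoration: risk assessment lacks a named system or failure mode"
            )

    # Policies must connect to at least one gate that can stop or modify a project.
    for p in docs.get("policies", []):
        if not p.get("deployment_gates"):
            findings.append(
                f"theater: policy '{p.get('name', '?')}' has no deployment gate"
            )

    # The monitoring plan must say what triggers a review and who owns it.
    plan = docs.get("monitoring_plan", {})
    if not plan.get("triggers") or not plan.get("owner"):
        findings.append(
            "wish list: monitoring plan lacks a review trigger or an owner"
        )

    return findings


# Illustrative input only.
docs = {
    "risk_assessments": [
        {"system": "resume-screener",
         "failure_modes": ["disparate impact on protected groups"]},
        {"system": "", "failure_modes": []},
    ],
    "policies": [{"name": "Acceptable AI Use", "deployment_gates": []}],
    "monitoring_plan": {"triggers": ["quarterly review"], "owner": ""},
}

for finding in audit_governance(docs):
    print(finding)
```

A check like this only catches empty fields. It cannot tell whether a named failure mode is the right one or whether a gate has ever actually stopped a project, which is why the reading step comes first.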

Governance protects your organization when something goes wrong. Theater protects it right up until that moment.

About the Author

John Rood is the founder of Proceptual, where he helps organizations build practical AI governance systems. He’s an ISO 42001 Lead Auditor and lead instructor for Michigan State University’s AI Governance Certification. His writing has appeared in HR Brew and TechTarget.